ElectronicHealthcare

Abstract

Use of electronic medical records (EMRs) is being promoted and funded across Canada. There is a need to consistently assess the use of those EMRs. This paper outlines an EMR adoption framework developed by the University of Victoria's eHealth Observatory. It assesses provider adoption of an EMR in office-based practices across ten functional categories. Assessments across practices can be compared and collated across regions and jurisdictions. A case study is presented in the paper that illustrates how the EMR adoption framework has been used over time with an office to help them assess and improve their EMR use.

Introduction

Canada still lags behind many countries in office-based electronic medical record (EMR) adoption (Schoen et al. 2009). There are several new and ongoing activities at provincial and national levels to spur the adoption of EMRs in medical offices. However, one of the missing pieces is a common framework to assess clinical adoption of the EMR within the office.

Many provinces have funding and support programs to encourage EMR adoption. Alberta has had the longest-running provincially supported EMR adoption program in Canada. British Columbia, Saskatchewan, Manitoba, Ontario and Nova Scotia also have programs. Each program varies in support models, in which EMRs are supported and in overall adoption rates. Many of these programs have an interest in evaluating the impact of the money spent.

In 2010, Canada Health Infoway received $500 million CDN; of this amount, $380 million is to be directed to office EMR adoption (Strasbourg 2010). This stimulus funding is meant to also support functional improvements and data interoperability among EMR products in Canada. Infoway is interested in ensuring systems are adopted in Canada. EMR implementation has been measured upwards of 37% in Canada (Schoen et al. 2009). However, current estimates in Canada are that only 14% of physicians are using EMRs in a clinically meaningful way (Dermer and Morgan 2010). Implementation does not necessarily imply clinical adoption and use.

With provincial and national funding streams spurring EMR adoption, now is an opportune time to develop and use a consistent framework for EMR adoption evaluation across jurisdictions. This paper presents a framework that could be used to help evaluate jurisdictional progress. It was also developed with the goal of aiding practices in improving their own adoption of EMRs by providing formative assessments over time.

We first present the eHealth Observatory's EMR Adoption Framework, and then describe a case study of its use. Our paper concludes with a brief discussion of the future direction of the EMR Adoption Framework.

eHealth Observatory's EMR Adoption Framework

The eHealth Observatory at the University of Victoria developed the EMR Adoption Framework during 2009–2010 to support the formative assessment of physician offices as they adopt EMRs. A growing set of evaluation tools (e.g., interview questions, scoring sheets) is available at the eHealth Observatory's website (see https://ehealth.uvic.ca).

The overall framework (Figure 1) consists of four elements. The first (at the top) is the Overall EMR Use score for a particular clinician (or aggregate score over a practice, community or jurisdiction). The second is the 10 Functional Categories that describe the core functionality of an EMR. The categories can be measured independently and aggregated into the overall use score. EMR Capability is the third element of the framework and describes both the product capability and the specific configuration of the EMR at the clinical site (e.g., some modules of the EMR may or may not be deployed). The fourth element is the Supporting eHealth infrastructure. This includes local/regional/jurisdictional capabilities to transmit information electronically with the EMR.

EMR Adoption Framework: Overall EMR Use

The overall use of the EMR is defined on a scale from 0 to 5 and is consistent with HIMSS Analytics (Healthcare Information and Management Systems Society). HIMSS is known for its evaluation of IT adoption in hospitals in the United States and Canada, and it has developed a similar approach to ambulatory EMR use (HIMSS 2009). Table 1 provides a summary of the overall features of each level, from 0 to 5, in the EMR Adoption Framework.

TABLE 1. Stages of EMR adoption, consistent with HIMSS Analytics

| Stage | Description |
| --- | --- |
| 5 | Full EMR that is interconnected with regional/community hospitals, other practices, labs and pharmacists for collaborative care. Proactive and automated outreach to patients (e.g., chronic disease management). EMR supports clinical research. |
| 4 | Advanced clinical decision support in use, including practice level reporting. Structured messaging between providers occurring within the office/clinic. |
| 3 | Computer has replaced paper chart. Laboratory data is imported in structured form. Some level of basic decision support, but the EMR is primarily used as an electronic paper record. |
| 2 | Partial use of computers at point of care for recording patient information. May leverage scheduling/billing system to document reasons for visit and be able to pull up simple reports. |
| 1 | Electronic reference material, but still paper charting. If transcription used, notes may be saved in free-text/word processing files. |
| 0 | Traditional paper-based practice. Charts on paper, results received on paper. May have localized computerized billing and/or scheduling, but this is not used for clinical purposes. |

An overall self-ranking can be quickly completed based on these descriptions alone, but a richer understanding can be developed by individually assessing provider use of the EMR across the functional categories, below.
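As a rough illustration of how the stages in Table 1 could be held as a lookup, the sketch below maps a score to a stage. The shortened labels, the function name `stage_reached` and the choice to round a fractional score down to the highest stage fully reached are all assumptions made for this example and are not part of the published scoring guide.

```python
import math

# Shortened labels paraphrased from Table 1 (wording abbreviated for this sketch).
STAGES = {
    0: "Traditional paper-based practice",
    1: "Electronic reference material, but still paper charting",
    2: "Partial use of computers at point of care",
    3: "Computer has replaced paper chart",
    4: "Advanced clinical decision support in use",
    5: "Full EMR interconnected for collaborative care",
}


def stage_reached(score: float) -> tuple[int, str]:
    """Return the stage number and label for a 0-5 adoption score.

    Assumption: a fractional score (e.g., an office average) is treated
    as the highest stage fully reached, so it is rounded down.
    """
    if not 0 <= score <= 5:
        raise ValueError(f"score must be between 0 and 5, got {score}")
    stage = math.floor(score)
    return stage, STAGES[stage]
```

Under this assumption, an averaged score that falls between two whole numbers is reported against the lower stage, which keeps the label conservative.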

EMR Adoption Framework: Functional Categories

To better understand and provide feedback on EMR use, ten functional categories of EMR features can be independently measured and scored with a ranking from 0 (paper) to 5 (fully integrated electronic record), keeping consistency with the overall score. The ten functional categories were adapted from an Institute of Medicine report to the Department of Health and Human Services that "provide[d] guidance on the key care delivery-related capabilities of an electronic health record system" (Tang 2003: 1). Based on our experience, we revised two categories (order entry and results management) to four (prescribing, laboratory, diagnostic imaging and referrals). This change better reflects independent groups of functionality in ambulatory care that were clearer to the providers. Definitions for each functional category are provided in Table 2. The eHealth Observatory has developed and continues to refine a set of 30 interview questions and a scoring guide to assess adoption across these functional categories. Together, the categories describe the core functionality of an EMR.

TABLE 2. Description of EMR functional categories

| Functional category | Description |
| --- | --- |
| Health information | Health information describes the patient information that is input into the EMR. Medical summary data are included (such as problem lists, past medical and surgical history, and allergies), as is clinical documentation, such as progress notes and vital signs. At Level 3, this data is all captured in the EMR; at Level 4, it is structured; and by Level 5, it is appropriately shared with others. |
| Laboratory management | Laboratory management includes all phases in the laboratory workflow, from ordering tests to reviewing results from external laboratories. A practice may be dependent on laboratories for some functionality, and there may not be electronic feeds from all laboratories. This is taken into account in the scoring system in the interview tool. |
| Diagnostic imaging | Diagnostic imaging (DI) management evaluates use of the EMR throughout the DI workflow, from ordering to receiving results. As with laboratory management, the provider/office may be dependent on multiple facilities. |
| Prescription management | Prescription management captures activities from prescribing to renewal processing in the office. Higher-level functions (e.g., Level 5) explore e-Prescribing (transmitting to pharmacy electronically). Drug interaction checking is also included in this category. |
| Referrals | Referrals includes both office-generated referral activities and incoming referrals (for specialists). Electronic transmission/receipt of referrals is considered at Level 5. |
| Decision support | Clinical decision support focuses on point-of-care reminders as well as chronic disease management tools related to individual patients. This is complemented by the practice reporting category. |
| Electronic communication | Electronic communication examines how the EMR is used to support communication activities between providers (including staff). Assessment includes whether electronic communication can be linked into patients' EMR records (Level 4) and whether the tools support communicating with providers outside of the office (Level 5). |
| Patient support | Patient support examines how the clinician uses the EMR to engage the patient in his or her health. For example, how is the clinician using the EMR to help select relevant handouts for the patient? Is there a secure web portal or data sharing with personal health records? |
| Administrative processes | Administrative processes are focused on scheduling, billing and management of paper (e.g., scanning). This includes outside access to scheduling (e.g., direct patient booking). |
| Practice reporting | Practice reporting examines how clinicians use the data in their EMRs at the point of reflection, that is, when they take time to reflect on their practices as a whole. Activities considered include using pre-built reports in the EMR, using custom reports (Level 4) and linking or sharing to external repositories for queries (Level 5). This category also considers whether the practice is using its EMR for additional research. |
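The roll-up from the ten functional categories to a single overall score might be sketched as follows. The paper states only that category scores can be aggregated into the overall use score; the unweighted mean used here, along with the names `FUNCTIONAL_CATEGORIES` and `overall_use_score`, is an assumption for illustration and may differ from the eHealth Observatory's scoring guide.

```python
from statistics import mean

# The ten functional categories named in Table 2.
FUNCTIONAL_CATEGORIES = (
    "Health information",
    "Laboratory management",
    "Diagnostic imaging",
    "Prescription management",
    "Referrals",
    "Decision support",
    "Electronic communication",
    "Patient support",
    "Administrative processes",
    "Practice reporting",
)


def overall_use_score(category_scores: dict[str, float]) -> float:
    """Aggregate per-category scores (each 0-5) into one overall score.

    Assumption: an unweighted mean of the ten categories; the actual
    scoring guide may weight or combine categories differently.
    """
    missing = set(FUNCTIONAL_CATEGORIES) - set(category_scores)
    if missing:
        raise ValueError(f"missing categories: {sorted(missing)}")
    unknown = set(category_scores) - set(FUNCTIONAL_CATEGORIES)
    if unknown:
        raise ValueError(f"unknown categories: {sorted(unknown)}")
    for name, score in category_scores.items():
        if not 0 <= score <= 5:
            raise ValueError(f"{name}: score {score} is outside 0-5")
    return round(mean(category_scores[c] for c in FUNCTIONAL_CATEGORIES), 2)
```

Requiring all ten categories before computing a score is a design choice in this sketch: it avoids comparing an office assessed on six categories with one assessed on ten.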

EMR Adoption Framework: EMR Capability

EMR capability is defined as the capability of the EMR as provided by the vendor and configured at the site. Unlike assessment of functional categories, EMR capability is independent of the actual use by the provider. This is what the EMR could do, if used to its full potential. It can be determined prior to assessing a provider or clinic's level of EMR adoption. The EMR capability will limit the provider's potential level of adoption. While it is not necessary to evaluate EMR capability, understanding it can help focus feedback and set expectations on what is possible with the EMR.

EMR Adoption Framework: Supporting eHealth Infrastructure

Supporting infrastructure is the local, regional and jurisdictional health IT infrastructure that the EMR can connect with to receive and transmit data to support care delivery. For example, regional hospitals may have a standard electronic interface for laboratory results distribution to physician EMRs; there may be provincial repositories and registries that the EMR can connect to; there may be a health information exchange. As with EMR capability, this supporting infrastructure is useful to understand as it can limit potential adoption in various functional categories. For example, at the time of writing, British Columbia did not have an e-Prescribing infrastructure; thus it would not be possible for a clinician to e-Prescribe (Level 5 of Prescription Management).
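The limiting effect of EMR capability and supporting infrastructure can be expressed as a simple ceiling per functional category. The sketch below assumes the ceiling is the lower of the two limits; the function names and the example levels are hypothetical and serve only to illustrate the idea.

```python
def adoption_ceiling(emr_capability: int, infrastructure: int) -> int:
    """Highest level a provider could reach in one functional category.

    Assumption: the ceiling is the lower of what the installed EMR can do
    and what the surrounding eHealth infrastructure supports.
    """
    for label, level in (("emr_capability", emr_capability),
                         ("infrastructure", infrastructure)):
        if not 0 <= level <= 5:
            raise ValueError(f"{label} must be between 0 and 5, got {level}")
    return min(emr_capability, infrastructure)


def headroom(observed: float, emr_capability: int, infrastructure: int) -> float:
    """Remaining room for improvement below the ceiling (never negative)."""
    return max(0.0, adoption_ceiling(emr_capability, infrastructure) - observed)


# Hypothetical example: the EMR product could support Level 5 prescribing,
# but the jurisdiction has no e-Prescribing infrastructure, so Level 4 is
# taken as the highest level the infrastructure allows.
print(adoption_ceiling(emr_capability=5, infrastructure=4))
```

Reporting headroom alongside the observed score helps separate gaps a practice can close on its own from gaps that depend on the vendor or the jurisdiction.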

What follows is a case study where the EMR Adoption Framework has been used to assess a primary care office as it adopts an EMR.

Methods: Case Study

This case study was performed at a full-service family practice office in rural British Columbia that was implementing an EMR. The office received funding through the BC PITO (Physician IT Office – see www.pito.bc.ca) EMR program and had selected a PITO-approved EMR product.

Two site visits occurred, at approximately two months and eight months post-EMR implementation, to assess the EMR adoption. Each site visit was three days in duration. (Note: additional studies not described here were completed during these site visits.)

Prior to the site visits, PITO requirements and EMR user manuals were reviewed to determine expected EMR capability. At each visit, the team completed six detailed EMR adoption interviews (with doctors and office staff) to assess level of adoption. Each interview was completed within 30 to 60 minutes. Findings were tabulated on-site and feedback was given to a larger group of ten clinic members at the conclusion of each site visit in a two-hour group discussion. The EMR Adoption Framework and scores were used to focus the discussion in a participatory manner on how the office might improve their adoption of their EMR.

Results: Case Study

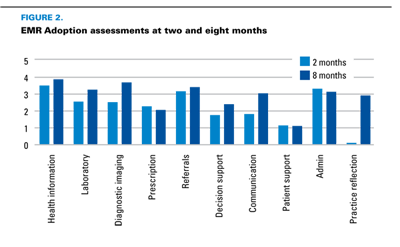

The results for the case study are summarized in Figure 2, which shows scores for each functional category, aggregated for the whole office, at two and eight months post-implementation. The office's overall EMR adoption score increased from 2.17 to 2.87 (out of 5) over the six-month period, showing that they were successfully transitioning from a paper practice to an electronic record. However, adoption and improvements were not equal across functional categories.
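To put the overall change in context, the reported scores can be compared directly. The sketch below uses only the two overall scores given above (2.17 and 2.87); the helper name `score_change` and the idea of expressing the change as a share of the 0 to 5 scale are assumptions added for illustration.

```python
SCALE_MAX = 5.0  # top of the 0-5 adoption scale


def score_change(before: float, after: float,
                 scale_max: float = SCALE_MAX) -> tuple[float, float]:
    """Return the absolute change and that change as a percentage of the scale."""
    change = round(after - before, 2)
    percent_of_scale = round(change / scale_max * 100, 1)
    return change, percent_of_scale


# Overall office scores reported at two and eight months post-implementation.
change, percent_of_scale = score_change(before=2.17, after=2.87)
print(f"change: {change:.2f} points ({percent_of_scale:.1f}% of the scale)")
```

The same calculation could be repeated per functional category to show where the change was concentrated, although the per-category values are reported only in Figure 2.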

At the first visit, the clinic was still getting used to even the basic aspects of the EMR. While everyone had EMR access, use varied by clinician. Some had switched to using the system completely, while others were still making the transition from writing notes on paper. Most were migrating health summary data into the EMR, with the help of additional staff. At the second visit, all patient encounters were being documented electronically, and some of the higher-level features in the EMR, such as reminders and recalls, were being used. Several specific scores are worth highlighting.

Scores for laboratory management and diagnostic imaging increased between the two visits as clinicians were more regularly using the EMR's built-in order capacity to order investigations, and external facilities were accepting the EMR printed requisitions. The EMR was able to receive most results electronically.

The prescribing score remained low for several reasons: first, not all prescriptions were being documented in the EMR; some renewal workflows were still on paper. Second, the EMR did not have the capability for drug interaction alerts. Thus, the clinic was not able to achieve higher marks in prescription management because their EMR as installed did not support this functionality (the ceiling effect).

Decision support increased as clinicians more consistently used reminder and recall features in the EMR. Electronic communication increased as several clinicians shifted from using sticky notes to communicate with staff to relying on the EMR to record communications. The largest shift in adoption was in practice reporting. At the first site visit, the clinic had not run any form of practice level reports (scoring 0). This was discussed at the first focus group, and the clinic was encouraged to run reports to confirm the quality of data being input into the EMR. By the second visit, every physician had run at least one report on their practice, and some were running reports regularly.

Over the six-month study, it was clear that the clinic was advancing in their adoption. At the first visit, the clinic was focused on getting familiar with the EMR and using the basic EMR to record visits. One clinician would "take days off" from the EMR and go back to paper charting for a day of rest. Several features were not being used at all. However, by the second visit, all providers were using the EMR for all visits. Paper charts were not used, except for historical reference. Some clinicians were using more advanced tools when they had time. They were beginning to change their clinical behaviours, using the EMR as more than a passive record, to help actively manage patients (e.g., using reminders). Over time, it is expected that their adoption scores will continue to increase.

Discussion

In this paper, we have presented a framework for EMR adoption that can be used to assess the use of an EMR in office-based practices. We have found that we can also track changes in adoption over time. This framework appears to be useful for both providing feedback to and eliciting it from clinicians and office staff on EMR use and further adoption. Using it as formative feedback highlighted areas for providers to focus on that they might have been unaware of during the busy days of office practice.

As a tool to assess overall adoption across a region or jurisdiction, this framework provides a reasonable structure that can be used to compare adoption levels. The framework is generic enough that we expect it could be used across jurisdictions, providing consistency in assessment. In comparison to "meaningful use" in the United States, there is considerable overlap in terms of the categories considered (Blumenthal and Tavenner 2010). Our approach differs from "meaningful use" in that we ask participants to describe their current state instead of setting a specific bar that they must reach. The Commonwealth Fund survey of primary care (Schoen et al. 2009) also assessed EMR adoption. The information we assess, within the questions of the EMR Adoption Framework interview tool, is relatively consistent with the questions asked in the Commonwealth Fund's 2009 survey. We cover similar EMR-related functionality; however, our questions tend to be broader, again allowing for a range of scores instead of yes/no-type answers. We ask additional questions related to diagnostic imaging, referrals, administrative functions and patient support. For the purpose of assessing and supporting change at the office level, our EMR Adoption Framework can provide more feedback to the providers. For the purpose of providing funding or for a broad survey, a more prescriptive approach may be easier to implement, as the criteria are more specific and more easily graded; however, there could be misunderstandings in assessing adoption levels. This could potentially explain the difference in adoption between the findings of Dermer and Morgan (2010) and Schoen and colleagues (2009).

There are many reasons why EMR implementations succeed or fail, including system design (usability, functions), project management, procurement and user experience (Ludwick and Doucette 2009). We have seen, through the case study, that the providers perceive benefit from ongoing reflective exercises within the office. These activities show them areas where they can focus, and provide an approach and a forum for discussing if and how they would want to focus on better adoption. In our case study, our sessions constituted one of the few times clinicians and staff had met throughout their implementation specifically to talk and learn, to plan adoption activities, and to discuss features of the EMR that were being used and how they were helping productivity and/or safety.

Since the EMR Adoption Framework's initial development, it has been refined through several iterations. Examples of interview and scoring tools as well as other implementation assessment tools that we have developed or used are freely available at ehealth.uvic.ca.

There are several limitations with the framework. First, it focuses on use of the EMR and does not currently examine any resulting changes in clinical behaviour or clinical outcomes. Use of an EMR is a prerequisite for seeing changes in either behaviour or outcomes and so is a reasonable starting point. Second, the methods and tools provided with the framework (interviews and focus groups) rely on user self-reporting. Comparison to more objective analysis (e.g., usability testing) highlights that users may describe their activities differently or not be aware of some of the distinctions between categories (e.g., they may assume they are documenting coded medications when, in fact, they are recording them as just free text).

Future work will include ongoing refinement of the existing tools as well as development of additional tools to assess more detailed use in each of the functional categories. At the eHealth Observatory some of this work has already begun. There are additional detailed assessment tools for prescription management. These include usability assessment and workflow analysis tools. They use different methods to assess in more detail how providers are using their EMR, and they complement the overall EMR Adoption Framework. The tools provide a more multi-method approach to assessment, which is important to validate perceived use in interviews.

The development of standardized tools to evaluate EMR capability and infrastructure is being considered as well, as are web-based tools that would allow for the capture, tabulation and anonymized comparison of levels of adoption in regions and across jurisdictions.

It is our hope that this EMR Adoption Framework is useful both for providers implementing EMRs in their offices and for program planners who are considering how to measure adoption of EMRs.

About the Author(s)

Morgan Price, MD, PhD, CCFP, Clinical Associate Professor, Department of Family Practice, UBC, Victoria, BC.

Francis Lau, PhD, Professor and CIHR/Infoway eHealth Chair, School of Health Information Science, University of Victoria, Victoria, BC.

James Lai, MD, CCFP, FCFP, Clinical Associate Professor, Department of Family Practice, UBC, Vancouver, BC.

References

Blumenthal, D. and M. Tavenner. 2010. "The 'Meaningful Use' Regulation for Electronic Health Records." New England Journal of Medicine (363)6: 501–4.

Dermer, M. and M. Morgan. 2010. "Certification of Primary Care Electronic Medical Records: Lessons Learned from Canada." Journal of Healthcare Information Management: JHIM (24)3: 49–55.

HIMSS. 2009. Stages of Clinical Transformation for Ambulatory Clinics/Physician Offices. <http://www.himssanalytics.org/docs/AmbulatoryEMRAMStages13April09.pdf> Accessed April 01, 2011.

Ludwick, D. and J. Doucette. 2009. "Adopting Electronic Medical Records in Primary Care: Lessons Learned from Health Information Systems Implementation Experience in Seven Countries." International Journal of Medical Informatics (78)1: 22–31.

Schoen, C., R. Osborn, M. Doty, D. Squires, J. Peugh and S. Applebaum. 2009. "A Survey of Primary Care Physicians in Eleven Countries, 2009: Perspectives on Care, Costs, and Experiences." Health Affairs 28:w1171.

Strasbourg, D. 2010. "Canada Invests $500 Million in Electronic Health Record (EHR) Systems with a Focus on Physicians and Nurse Practitioners across Canada." <https://www.infoway-inforoute.ca/about-infoway/news/news-releases/637> Accessed April 01, 2011.

Tang, P. 2003. Key Capabilities of an Electronic Health Record System. Washington, DC: Institute of Medicine Committee on Data Standards for Patient Safety.
