Healthcare Policy

Assessing the Acceptability of Quality Indicators and Linkages to Payment in Primary Care in Nova Scotia

Abstract

In 2006, the Canadian Institute for Health Information (CIHI) released a comprehensive set of quality indicators (QIs) for primary healthcare (PHC). We explored the acceptability of a subset of these as measures of the technical quality of care and the potential link to payment incentive tools. A modified Delphi approach, based on the RAND consensus panel method, was used with an expert panel composed of PHC providers (family physicians, nurses and nurse practitioners) and decision-makers with no previous experience of "pay for performance." A nine-point Likert scale was used to rate the acceptability of 35 selected CIHI QIs in community practice and the acceptability of a payment mechanism associated with each. QIs rated with disagreement were discussed and re-rated in a face-to-face meeting. The panel rated 19 QIs as "acceptable." Payment incentives associated with these QIs were acceptable for 13. Several factors emerged that were common to the less appealing QIs with respect to payment linkage.

Health indicators are "standardized measures that can be used to measure health status and health system performance and characteristics across different populations, between jurisdictions or over time" (CIHI 2005). An indicator is an evidence- or consensus-based standardized measure that conveys a dimension of health system structure, healthcare process (interpersonal or clinical) or health outcome (Marshall et al. 2003). Indicators can be used to assess performance; monitor health status; provide information for program or policy planning, evaluation and resource allocation; explore equity; track changes over time; identify gaps in health and healthcare (CIHI 2006c); and achieve accountability (CIHI 2005; Committee on Redesigning Health Insurance Performance Measures 2006). They are used as tools for measuring the quality of care in "strategic planning and priority setting, supporting quality improvement and for conveying important health information to the public" (CIHI 2005). Quality-of-care indicators (also called quality indicators [QIs], performance indicators or performance measures) for primary healthcare (PHC) have been developed and subjected to preliminary testing over the past decade in a number of countries worldwide (Engels et al. 2005; Marshall et al. 2003; McGlynn et al. 2003). Large-scale efforts to develop and use QIs as a tool to enhance the quality of care through "pay for performance" have been in use in the United States and, most extensively, in the Quality Outcomes Framework in the United Kingdom (Lester et al. 2006; Roland 2004, 2007).

Canada, unlike these countries, is only in the early stages of PHC quality indicator development and application. In Ontario, a panel of primary healthcare practitioners has evaluated and selected performance indicators (Barnsley et al. 2005). Nationally, the Canadian Institute for Health Information (CIHI) released in 2006 a comprehensive set of indicators encompassing all aspects of PHC practice in response to the objectives of the PHC Transition Fund National Evaluation Strategy (CIHI 2006c). As QI development unfolds, broader assessment of the acceptability of specific indicators as measures of quality in practice and the simultaneous assessment of acceptability to practitioners of including the indicators in possible payment strategies need to be explored. Even though comprehensive data sources do not presently exist to calculate many of the CIHI indicators (CIHI 2006c), feasibility work is underway to guide modifications to existing electronic medical records for data capture strategies and sources (CIHI 2006b). We recognize that the identification of quality indicators considered acceptable to providers and decision-makers is only one component of a broad strategy of performance measurement and management.

This paper reports on the first phase of a three-phased, mixed-methods study to assess the acceptability and feasibility of a quality-of-care orientation to primary healthcare. The purpose of phase one was to explore the acceptability of a subset of the CIHI PHC quality indicators that are focused on measuring the quality of clinical care among a combined group of PHC professionals and healthcare policy decision-makers. Acceptability was explored from two dimensions: (1) which of the QIs were most acceptable to the participants as valid measures of quality, and (2) which of the QIs might be considered most acceptable to link to payment incentive tools.

Method

The Pan-Canadian Primary Health Care Indicators were developed and selected in a multi-stage process and formed the basis for this study (CIHI 2006c). We chose indicators for this study from the "quality in PHC" domain, one of eight domains in the full set of indicators. Our research team focused on this set of indicators because we believed it to be the one most relevant to practising clinicians in terms of the focus of their clinical work, unlike others targeted at the organization of care. These indicators are indeed most likely to be found, ultimately, in electronic medical record (EMR) systems as they mature. We believe these indicators, as also reported by CIHI (2006a), represent the greatest PHC data gap Canada-wide and, with the use of newly emerging EMRs, should become critical tools for QI assessment. Given our plan to test the feasibility of EMRs further to provide data elements for indicator assessment, we wished to reduce the existing 35 QIs in this domain to a ranked set considered acceptable by a multi-professional stakeholder group.

A two-staged modified Delphi/RAND Appropriateness method was employed to assess the acceptability of the subset of 35 CIHI PHC quality indicators. Thirty-five of 38 indicators identified in the Pan-Canadian Primary Health Care Indicator Development Project as indicators of quality in PHC and listed under CIHI Objective 5 – "To deliver high quality and safe primary healthcare service and to promote a culture of quality improvement in primary health care organizations" (CIHI 2006a) – were included. These 35 were not reliant on patient surveys and considered events of enough frequency that practice-level data would be meaningful. Fourteen of these QIs focused on risk assessment/screening/primary prevention/case finding, 16 targeted care for those with established conditions and five tapped the structure and functioning of the PHC organization.

Participants and process

Participants in our expert panel were selected through a search and nomination process, typical of modified Delphi and RAND techniques (Campbell et al. 2002; Campbell and Hacker 2002; Marshall et al. 2003). Nominations of participants were requested from the Nova Scotia College of Family Physicians, Nova Scotia Department of Health, Doctors Nova Scotia, Primary Health Care Information Management Program, primary healthcare nurses and nurse practitioners, community family physicians and research team members. Participants were sought to represent a range of age, gender, geographic settings, and traditional and new collaborative PHC practices.

Nominees who agreed to take part were sent, by courier and e-mail, a survey tool organized by QI, with a proposed measurement definition and several reference materials pertaining to measuring performance and the pros and cons of QIs in PHC. As part of the survey, panelists were asked to rate the acceptability of each indicator as a measure of quality of care within the influence of the scope of PHC and to assess the acceptability of payment potentially linked to each. Panelists were also encouraged to provide written comments about their ratings in terms of relevance to PHC, validity of the indicators and thoughts on issues related to possible payment linkages to indicator achievement.

A nine-point Likert scale, adapted from Marshall and colleagues (2003) and Normand and colleagues (1998), was used to rate the acceptability of each QI in community practice and the acceptability of a potential payment link. An indicator score of 1–3 was deemed not acceptable, 4–6 uncertain acceptability and 7–9 acceptable.

Rating results were tabulated, and QIs rated with substantial disagreement among panelists were identified by applying first an absolute measure and then a relative measure, as outlined by Normand and colleagues (1998). These were defined and applied as follows:

Absolute measure: Any indicator with an observed range of the overall rating of 8 was considered a "disagreeing" quality indicator (i.e., one panelist gives the QI a 1 and another gives it a 9). After we removed the disagreeing QIs using the absolute measure, the relative measure was applied to those remaining.

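Relative measure: For each measure i, the coefficient of variation (CV) across the raters was calculated:

$$\mathrm{CV}_i = \frac{s_i}{\bar{x}_i}$$

where $\bar{x}_i$ is the mean and $s_i$ the standard deviation of the panelists' ratings of measure i.

The observed $\mathrm{CV}_i$ values were ordered from smallest to largest, and measures corresponding to the top 20% of $\mathrm{CV}_i$ values were considered rated with substantial disagreement.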
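The two-step disagreement rule described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the study's actual analysis code: the function name `flag_disagreement`, the example ratings and the indicator labels are hypothetical, the sample standard deviation is assumed (the text does not say which form was used), and the size of the "top 20%" group is rounded up.

```python
import math
from statistics import mean, stdev


def flag_disagreement(ratings, top_fraction=0.20):
    """Apply the absolute measure, then the relative measure.

    ratings maps an indicator label to the list of panelist scores (1-9).
    Returns (absolute, relative, cv): two sets of flagged labels and the
    coefficient of variation of every indicator that passed the first step.
    """
    # Absolute measure: an observed range of 8 (one rater gave 1, another 9).
    absolute = {qi for qi, r in ratings.items() if max(r) - min(r) == 8}

    # Relative measure, applied only to the indicators that remain.
    remaining = {qi: r for qi, r in ratings.items() if qi not in absolute}
    # Assumption: sample standard deviation (n - 1 in the denominator).
    cv = {qi: stdev(r) / mean(r) for qi, r in remaining.items()}

    ordered = sorted(cv, key=cv.get)  # smallest to largest CV
    # Assumption: the size of the top 20% group is rounded up.
    n_top = math.ceil(top_fraction * len(ordered))
    relative = set(ordered[len(ordered) - n_top:])
    return absolute, relative, cv


# Hypothetical ratings from four panelists, for illustration only.
example_ratings = {
    "QI-A": [1, 5, 7, 9],
    "QI-B": [7, 8, 8, 9],
    "QI-C": [3, 5, 7, 8],
    "QI-D": [6, 7, 7, 8],
    "QI-E": [8, 8, 9, 9],
    "QI-F": [7, 7, 8, 8],
}

absolute, relative, cv = flag_disagreement(example_ratings)
print("Absolute measure:", sorted(absolute))
print("Relative measure:", sorted(relative))
```

With these made-up ratings, QI-A is flagged by the absolute measure because its range is 8, and QI-C is flagged by the relative measure because it has the largest CV among the five that remain.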

The second round of the modified Delphi process involved a face-to-face meeting of panel members to discuss QIs that were rated with substantial disagreement. Each member was confidentially provided a copy of his or her own rating for each QI, as well as the location of the member's response relative to the overall distribution of the group. With the help of a moderator, the group discussed each QI where disagreement was evident. After the discussion, participants confidentially re-evaluated these QIs and results were again tabulated. Using the final mean score rank, an ordered list of the 35 QIs was produced, from most to least acceptable.

Written comments from panel members were compiled from both stages of the Delphi process and combined with research team members' field notes from the face-to-face meeting. Two of the investigators coded these comments and field notes. From these, common themes relating to the principles that participants felt were relevant to the concept of acceptability (both as a measure of quality and as acceptable to link to a payment strategy) were identified and are discussed below.

Findings

Eighteen people participated in the Delphi survey process: 10 family physicians, five nurses/nurse practitioners and three decision-makers. All healthcare providers were currently in practice. Family physicians were primarily male (70%); nurses/nurse practitioners were all female. The majority (67%) practised in an urban setting and represented a variety of practice types (solo, group, community health centres, academic). Decision-makers represented provincial, regional and professional levels. Of those who participated in the survey process, 16 attended the face-to-face meeting to discuss QIs with disagreement.

Quality indicator acceptability

The first Delphi survey round resulted in agreement being reached on 18 QIs, leaving 17 rated with substantial disagreement. These latter QIs were brought forward for discussion and re-rated in the face-to-face meeting.

Appendix A lists each of the original 35 proposed QIs by its final rank order. Mean scores ranged from a high of 8.1 (screening for modifiable risk factors in adults with diabetes) to a low of 2.7 (asthma control). Nineteen QIs were ranked as acceptable, with a final mean score of 7.0 or greater.

The final set of 19, ranked by acceptability as a QI within an area of focus, can be found in Table 1. The majority of acceptable QIs were process-oriented performance indicators with a focus on prevention. Ten QIs assessed primary prevention strategies, four examined secondary prevention performance, four were proxy outcomes (two indicating treatment had been given and two indicating clinical targets were met) and one was a patient safety QI.

Table 1. Ranking and rating of accepted PHC quality indicators by area of focus

| Indicators by area of focus | Rank | Indicator acceptability, mean score (SD) | Payment linkage acceptability, mean score (SD) |
| --- | --- | --- | --- |
| Prevention | | | |
| Primary prevention | | | |
| Childhood immunization (CIHI44)* | 2 | 7.9 (0.9) | 7.5 (1.4) |
| Cervical cancer screening (CIHI50) | 5 | 7.8 (0.9) | 7.3 (2.4) |
| Pneumococcal immunization, 65+ (CIHI42) | 6 | 7.8 (1.2) | 7.3 (1.5) |
| Breast cancer screening (CIHI49) | 11 | 7.6 (1.3) | 7.1 (1.6) |
| Bone density screening (CIHI51) | 12 | 7.5 (1.1) | 7.4 (1.1) |
| Dyslipidemia screening for men (CIHI53) | 13 | 7.4 (1.3) | 7.2 (1.4) |
| Influenza immunization (CIHI41) | 14 | 7.4 (1.8) | 7.3 (1.7) |
| Blood pressure testing (CIHI54) | 16 | 7.3 (1.3) | 7.1 (1.5) |
| Colon cancer screening (CIHI48) | 18 | 7.1 (1.7) | 6.7 (1.9) |
| Dyslipidemia screening for women (CIHI52) | 19 | 7.0 (1.8) | 6.7 (1.6) |
| Secondary prevention | | | |
| Screening for modifiable risk factors in adults with diabetes (CIHI57) | 1 | 8.1 (0.8) | 7.6 (1.3) |
| Screening for modifiable risk factors in adults with coronary artery disease (CIHI55) | 3 | 7.9 (1.2) | 7.5 (1.3) |
| Screening for modifiable risk factors in adults with hypertension (CIHI56) | 9 | 7.7 (1.5) | 7.2 (1.7) |
| Screening for visual impairment in adults with diabetes (CIHI58) | 10 | 7.7 (1.5) | 6.8 (1.8) |
| Outcomes | | | |
| Treatment of dyslipidemia (CIHI61) | 4 | 7.9 (1.5) | 7.5 (1.5) |
| Blood pressure control for hypertension (without diabetes or renal failure) (CIHI40) | 7 | 7.7 (0.9) | 6.2 (1.8) |
| Glycaemic control for diabetes (CIHI39) | 15 | 7.3 (1.1) | 5.1 (2.5) |
| Treatment of congestive heart failure (CIHI60) | 17 | 7.1 (1.5) | 7.1 (1.6) |
| Patient safety | | | |
| Maintaining medication and problem lists in PHC (CIHI70) | 8 | 7.7 (1.5) | 6.8 (2.0) |

* CIHI #: Indicates the Canadian Institute for Health Information numbered PHC indicator

Coding of the written comments and the face-to-face discussion provided insight into principles that were associated with an acceptable QI. Key principles included a QI being evidence-based, easy to measure and clearly worded, having clearly defined criteria (e.g., specific operational definitions, standardized screening tools, objective values to reach) and allowing clear identification of the patient population of interest and patient exclusions. QIs that acted as a reminder to the provider not to overlook care (e.g., a prompt to provide pneumococcal immunization) were regarded favourably. The primary concern about whether a QI was deemed acceptable seemed to centre on whether it assessed an outcome or a process. Some providers felt that they could only counsel or advise but did not have the power to control compliance. One example is advising dietary changes without being responsible for the individual's final dietary patterns.

Comments were also made that PHC providers may not have access to tools to help achieve a QI target, such as an electronic medical record that can extract practice-level data. Other factors influencing the acceptability of a QI to our panel included whether the QI was under PHC control (e.g., breastfeeding and its many community and societal influences), the timeliness of evidence supporting the QI, the need for adjustments in QI achievement based on differing practice population characteristics, the impact of co-morbidity burden on QI achievement, and whether the QI focused on the provider's behaviour versus "system" or "organization" capabilities. QIs that focused on system capabilities tended not to be well understood or favoured by the majority of panel members. One system QI seen as challenging was "implementation of PHC clinical quality improvement initiatives." Its definition – "the percentage of PHC organizations who implemented at least one or more changes in clinical practice as a result of quality improvement initiatives over the past 12 months" – was seen to be one that a region or health authority would be rated on rather than an individual practice. This indicator received an average score of 5.4 (SD 2.7).

Payment link acceptability

In the first round of the Delphi survey, agreement was reached for 16 QIs on linking a QI to payment, leaving 19 QIs rated with disagreement to be discussed and re-rated in the second-stage face-to-face meeting.

Table 1 includes the final rating score for linking a QI to payment for each of the top 19 QIs identified as most acceptable indicators of quality. Mean rating scores ranged from a high of 7.6 (screening for modifiable risk factors in adults with diabetes) to a low of 5.1 (glycaemic control for diabetes). The rank order in this table is determined by the score for the acceptability of the QI itself as an indicator of quality of care and is not a ranking of the acceptability of payment linkage. Linking payment to the achievement of a potential QI was of secondary importance in this first phase of the project. At this stage, the acceptability of the QI, as a measure of quality of care itself, was of primary interest. In the third phase of the project, payment link ratings associated with the most acceptable and feasible QIs from the first two phases will be given greater focus.

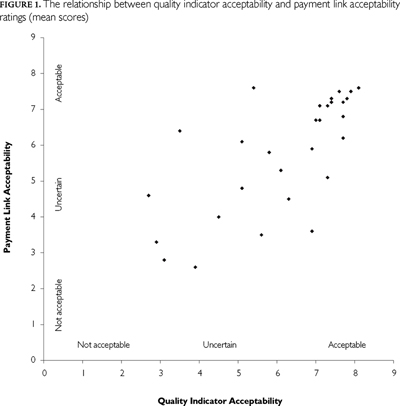

Figure 1 illustrates the relationship between the acceptability ratings for the QI itself and the associated acceptability rating for a payment link for all 35 QIs initially rated. Although a moderate positive linear relationship can be seen (Pearson correlation coefficient r=0.74), variability is evident. Most QIs rated as acceptable indicators of quality of care (7.0 or greater) tended also to score higher with respect to the acceptability of a payment link. The primary exceptions were associated with QIs assessing such performance outcomes as blood pressure control for hypertension (CIHI40) and glycaemic control for diabetes (CIHI39), where the acceptability of a payment link was rated relatively lower than that for the QI itself.
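For readers who wish to see how such a coefficient is obtained, the following is a minimal sketch of the Pearson correlation computed from paired mean scores. The function name `pearson_r` is our own, and the six pairs are only a small subset of rows transcribed from Appendix A, so the result is illustrative and is not expected to reproduce the r=0.74 reported for all 35 QIs.

```python
import math


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must have the same length (at least 2)")
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    # Sums of cross-products and of squared deviations about the means.
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


# Subset of (indicator mean, payment-link mean) pairs from Appendix A:
# CIHI 57, 44, 40, 39, 38 and 37. Illustrative only, not the full set of 35.
indicator_means = [8.1, 7.9, 7.7, 7.3, 6.9, 5.6]
payment_means = [7.6, 7.5, 6.2, 5.1, 3.6, 3.5]

r_subset = pearson_r(indicator_means, payment_means)
print(f"r for the illustrative subset: {r_subset:.2f}")
```

Running the same function on all 35 pairs of mean scores would be the way to check the reported coefficient.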

In the analysis of the qualitative comments made by panelists on the surveys and in the face-to-face meeting, a number of concerns were raised with respect to linking payment to the achievement of a QI. Concerns were voiced about whether PHC practitioners should receive additional incentives for what is considered the standard of care. Some panelists did not feel they should be paid more to do what they are already doing, or should be doing. Panelists also expressed the need to be able to adjust the denominator to account for patients who refuse care or those with contraindications. Because not all practice populations are the same, for some, the patient mix would make achieving the indicator more challenging. Thus, having the ability to account for patient mix was thought important. This same point was made in the comments regarding the acceptability of some indicators as valid measures of quality of care (see above).

Panelists felt that striving to achieve QI targets has the potential to interfere with the provider–patient relationship by forcing attention away from patient agendas to only those issues that increased income for the provider.

Similar to the assessment of acceptability for quality of care, some QIs, such as those assessing outcomes and others requiring tests not readily available, were felt to be beyond providers' control.

A number of concerns pertaining to "gaming" were raised. Some felt that financial incentives to achieve QIs could lead some providers to select new patients based on their conditions while also encouraging others with "undesirable" conditions to leave the practice.

Additional questions were raised about the sharing of responsibility for a patient with other providers (i.e., which provider would receive the incentive), management of QI costs, documentation of offer or advice, and achieving percentage of change versus absolute change and group versus individual targets. Overall acceptability of a payment link (and the QI itself) was rejected if the QI was felt to be poorly defined or the wording of the QI implied that treatment required an incentive following diagnosis (e.g., was the patient diagnosed with depression offered treatment).

Discussion

Overall, 19 of the initial 35 QIs were ranked as acceptable measures of quality of care (7.0 or greater). Fourteen of these were associated with prevention strategies (10 primary prevention, four secondary), four were outcomes and one was a patient safety QI. We were encouraged to see the clear link in our panelists' thinking between what they ranked as acceptable QIs and those QIs considered acceptable to link to payment strategies. If a cut-off mean score of 7.0 or greater in the ratings of "acceptability to payment linkage" was also applied, our final QI set would reduce to 13 items. Our study team has, however, retained the 19 for the initial feasibility work to be conducted in phase two of this study. The integration of the payment rating findings will be used in phase three.
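The reduction from 19 to 13 items can be checked directly against the mean scores in Table 1. The sketch below applies the 7.0-or-greater cut-off to both ratings; the variable and function names are our own, and the values are transcribed from Table 1 as published (rounded to one decimal place).

```python
# (CIHI number, indicator mean, payment-link mean), transcribed from Table 1.
TABLE_1 = [
    # Primary prevention
    (44, 7.9, 7.5), (50, 7.8, 7.3), (42, 7.8, 7.3), (49, 7.6, 7.1),
    (51, 7.5, 7.4), (53, 7.4, 7.2), (41, 7.4, 7.3), (54, 7.3, 7.1),
    (48, 7.1, 6.7), (52, 7.0, 6.7),
    # Secondary prevention
    (57, 8.1, 7.6), (55, 7.9, 7.5), (56, 7.7, 7.2), (58, 7.7, 6.8),
    # Outcomes
    (61, 7.9, 7.5), (40, 7.7, 6.2), (39, 7.3, 5.1), (60, 7.1, 7.1),
    # Patient safety
    (70, 7.7, 6.8),
]

CUTOFF = 7.0  # mean score of 7.0 or greater


def meets_both_cutoffs(rows, cutoff=CUTOFF):
    """Return CIHI numbers whose indicator and payment-link means both reach the cut-off."""
    return [cihi for cihi, indicator, payment in rows
            if indicator >= cutoff and payment >= cutoff]


kept = meets_both_cutoffs(TABLE_1)
dropped = [cihi for cihi, _, _ in TABLE_1 if cihi not in kept]
print(len(kept), "QIs meet both cut-offs")           # 13
print("Below the payment cut-off:", sorted(dropped))  # [39, 40, 48, 52, 58, 70]
```

The six indicators that fall below the payment cut-off are the two outcome-based control measures (CIHI39, CIHI40), colon cancer screening, dyslipidemia screening for women, screening for visual impairment in adults with diabetes, and the patient safety indicator.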

The finding of general enthusiasm for QIs, particularly among providers, is not unique to the Canadian setting (Young et al. 2007). However, this enthusiasm predates actual experience with performance measurement strategies, and once these are implemented, concerns tend to follow (Greene and Nash 2008). The types of concerns expressed by our panelists are similar to those found in the literature. Specifically, these concerns include the challenges of creating clear operational definitions, the ability to identify the numerator and denominator from practice records, where the "majority of control" of achieving the QI rested (with provider or patient) and the ability to adjust achievement by patient characteristics. The identification of preference given to QIs based on process activities rather than outcomes has led some proponents to suggest that incentive strategies might best be constructed around a combination of these two types of measures (Lilford et al. 2007). If the indicators removed from consideration are not used, any resulting performance incentive strategies may leave a number of issues unaddressed, ranging from medication use for chronic conditions (asthma, myocardial infarction, depression and anxiety), to well child care (breastfeeding, injury prevention, well baby screening), to some practice organization issues (quality improvement initiatives, medication incident reduction). It is important to remember that our panel's reasons for excluding an indicator may not reflect the perceived validity of the issue, but rather views on the ability to measure the indicator with any perceived accuracy.

Linking the use of QIs to payment strategies, generally known as pay for performance (P4P), is relatively new in the Canadian setting. Early efforts have begun in British Columbia, Ontario and Nova Scotia, where payment for chronic disease care and some prevention strategies is underway (British Columbia Ministry of Health 2006; OMHLTC 2009). Our findings lend support for potential payment mechanisms. Our participant providers and decision-makers agree that some QIs are acceptable as valid measures and also warrant financial incentive strategies. Following the second phase of our study, which examines the feasibility of obtaining EMR information to populate the 19 indicators deemed most acceptable in phase one, we will bring together the results of these two phases to fully explore acceptable funding mechanisms for what we believe will likely be an even smaller QI set.

As the use of quality or performance indicators unfolds in PHC in Canada, it will require general acceptance by different stakeholders. In evaluating the technical effectiveness of the quality of PHC using these QIs, the necessary stakeholders will comprise both healthcare providers (family physicians, nurses, nurse practitioners, pharmacists, dietitians and others) and funder decision-makers (such as provincial ministries of health, who pay for the health services provided). It is essential to understand which measures achieve a sense of acceptability to the providers and funders and, more broadly, the principles or characteristics that underlie a measure's inherent acceptability for future work. Patient participation in assessing the acceptable QIs has begun in the United Kingdom but has had limited success (Murie and Douglas-Scott 2004).

Performance measurement or management – the broader strategy in which the use of QIs is but one component – is a challenging area in healthcare delivery today. Although definitions vary, it is generally thought to include four stages: (1) conceptualization, (2) selection/development of measures (the QIs), (3) data collection and processing, and (4) the reporting and use of the results (Adair et al. 2006). The intent is to serve two main purposes: to improve quality and to promote accountability (Freeman 2002). Our study has sought only to provide information on what the participants considered an acceptable, manageable set of measures (the QIs) for consideration in a performance management approach in primary care given a rather large set developed by a national organization (CIHI 2006c). Using such indicators in a performance management approach has both intended consequences (improvements in quality of care, outcomes for specific situations or both) and unintended ones (exclusion of some conditions or situations; focus on building better measures and ignoring underlying process; gaming, blaming and lowering morale) (Freeman 2002). The United Kingdom and private healthcare organizations in the United States have been experiencing these issues and are modifying their approaches to minimize them.

Limitations

As with all Delphi processes, it is important to consider the limitations. The participating panelists were purposively chosen to achieve a range of opinions. They may not represent the majority view of all PHC providers and decision-makers. In addition, the work was conducted in Nova Scotia, where electronic medical record uptake is in the order of 30% of family practices and where "structured, pre-defined" new models of PHC delivery have not been introduced as in other Canadian provinces (such as family health teams in Ontario, family medicine groups in Quebec or primary care networks in Alberta).

Conclusion

The findings of our study provide important evidence of the acceptability to health providers and funders of a small set of QIs and of their views of linking payment to performance on these QIs. Steps are now underway in phase two of our research to examine the ability to extract data from electronic records in primary care practices. This second phase of our study will report on the feasibility of finding the data to populate the 19 QIs deemed acceptable. Other related efforts are underway across the country in order to move the measurement issues forward. A critical large-scale effort is the Canadian Primary Care Sentinel Surveillance Network (2009), funded by the Public Health Agency of Canada, which is focused on chronic disease surveillance in primary care using electronic medical records. Until we are confident that our measurement of the QIs is achievable, linking pay to performance will be difficult to implement.

| Appendix A. Primary healthcare CIHI quality indicators by rank order in Nova Scotia, with associated ranking for linking the indicator to payment | |||||

| Rank | CIHI # | Indicator | Description | Mean (SD) | |

| Indicator | Payment link | ||||

| 1 | 57 | Screening for modifiable risk factors in adults with diabetes | Percentage of PHC clients/patients, 18 years and over, with diabetes mellitus who received annual testing within the past 12 months for all of the following: Hemoglobin A1c testing (HbA1c); Full fasting lipid profile screening; Nephropathy screening (e.g., albumin/creatinine ratio, microalbuminuria); Blood pressure (BP) measurement; and Obesity/overweight screening. | 8.1 (0.8) | 7.6 (1.3) |

| 2 | 44 | Child immunization | Percentage of PHC clients/patients who received required primary childhood immunizations by 7 years of age. | 7.9 (0.9) | 7.5 (1.4) |

| 3 | 55 | Screening for modifiable risk factors in adults with coronary artery disease (CAD) | Percentage of PHC clients/patients, 18 years and over, with coronary artery disease who received annual testing within the past 12 months for all of the following: Fasting blood sugar; Full fasting lipid profile screening; Blood pressure measurement; and Obesity/overweight screening. | 7.9 (1.2) | 7.5 (1.3) |

| 4 | 61 | Treatment of dyslipidemia | Percentage of PHC clients/patients, 18 years and over, with established CAD and elevated LDL-C (i.e., greater than 2.5 mmol/L) who were offered lifestyle advice and/or lipid-lowering medication. | 7.9 (1.5) | 7.5 (1.5) |

| 5 | 50 | Cervical cancer screening | Percentage of women PHC clients/patients, ages 18 to 69 years, who received papanicolaou smear within the past 3 years. | 7.8 (0.9) | 7.3 (2.4)* |

| 6 | 42 | Pneumococcal immunization, 65+ | Percentage of PHC clients/patients, 65 years and over, who have received a pneumococcal immunization. | 7.8 (1.2) | 7.3 (1.5) |

| 7 | 40 | Blood pressure control for hypertension (without diabetes mellitus or renal failure) | Percentage of PHC clients/patients, 18 years and over, with hypertension (without diabetes mellitus or renal failure) for duration of at least 1 year, who have blood pressure measurement control (i.e., less than 140/90 mmHg). | 7.7 (0.9)* | 6.2 (1.8)* |

| 8 | 70 | Maintaining medication and problem lists in PHC | Percentage of PHC organizations with a process in place to ensure that a current medication and problem list is recorded in the PHC client/patient's health record. | 7.7 (1.5) | 6.8 (2.0)* |

| 9 | 56 | Screening for modifiable risk factors in adults with hypertension | Percentage of PHC clients/patients, 18 years and over, with hypertension who received annual testing within the past 12 months for all of the following: Fasting blood sugar; Full fasting lipid profile screening; Test to detect renal dysfunction (e.g., serum creatinine); Blood pressure measurement; and Obesity/overweight screening. | 7.7 (1.5) | 7.2 (1.7) |

| 10 | 58 | Screening for visual impairment1 in adults with diabetes | Percentage of PHC clients/patients, 18 to 75 years, with diabetes mellitus who saw an optometrist or ophthalmologist within the past 24 months. | 7.7 (1.5)* | 6.8 (1.8)* |

| 11 | 49 | Breast cancer screening | Percentage of women PHC clients/patients, ages 50 to 69 years, who received mammography and clinical breast exam within the past 24 months. | 7.6 (1.3) | 7.1 (1.6) |

| 12 | 51 | Bone density screening | Percentage of women PHC clients/patients, 65 years and older, who received screening for low bone mineral density at least once. | 7.5 (1.1) | 7.4 (1.1) |

| 13 | 53 | Dyslipidemia screening for men | Percentage of male PHC clients/patients, 40 years and over, who had a full fasting lipid profile measured within the past 24 months. | 7.4 (1.3) | 7.2 (1.4) |

| 14 | 41 | Influenza immunization, 65+ | Percentage of PHC clients/patients, 65 years and over, who received an influenza immunization within the past 12 months. | 7.4 (1.8) | 7.3 (1.7) |

| 15 | 39 | Glycaemic control for diabetes | Percentage of PHC clients/patients, 18 years and over, with diabetes mellitus in whom the last HbA1c was 7.0% or less (or equivalent test/reference range, depending on local laboratory) in the last 15 months. | 7.3 (1.1)* | 5.1 (2.5)* |

| 16 | 54 | Blood pressure testing | Percentage of PHC clients/patients, 18 years and over, who had their blood pressure measured within the past 24 months. | 7.3 (1.3) | 7.1 (1.5) |

| 17 | 60 | Treatment of congestive heart failure (CHF) | Percentage of PHC clients/patients, 18 years and over, with CHF who are using ACE inhibitors or ARBs. | 7.1 (1.5) | 7.1 (1.6) |

| 18 | 48 | Colon cancer screening | Percentage of PHC clients/patients, 50 years and over, who received screening for colon cancer with haemoccult test within the past 24 months. | 7.1 (1.7) | 6.7 (1.9) |

| 19 | 52 | Dyslipidemia screening for women | Percentage of female PHC clients/patients, 55 years and over, who had a full fasting lipid profile measured within the past 24 months. | 7.0 (1.8) | 6.7 (1.6) |

| 20 | 38 | Emergency department visits for congestive heart failure (CHF) | Percentage of PHC clients/patients, ages 20 to 75 years, with congestive heart failure who visited the emergency department for congestive heart failure in the past 12 months. | 6.9 (1.4)* | 3.6 (2.5)* |

| 21 | 68 | Use of medication alerts in PHC | Percentage of PHC organizations who currently use an electronic prescribing/drug ordering system that includes client/patient specific medication alerts. | 6.9 (1.7) | 5.9 (2.7)* |

| 22 | 45 | Breastfeeding education | Percentage of women PHC clients/patients who had a live birth and received counselling on breastfeeding, education programs and post-partum support to promote breastfeeding. | 6.3 (1.9)* | 4.5 (2.8)* |

| 23 | 67 | PHC support for medication incident reduction | Percentage of PHC providers whose PHC organization has processes and structures in place to support a non-punitive approach to medication incident reduction. | 6.1 (1.5) | 5.3 (2.9)* |

| 24 | 46 | Depression screening for pregnant and post-partum women | Percentage of women PHC clients/patients who are pregnant or post-partum who have been screened for depression. | 5.8 (1.8)* | 5.8 (1.8) |

| 25 | 37 | Emergency department visits for asthma | Percentage of PHC clients/patients, ages 6 to 55 years, with asthma who visited the emergency department for asthma in the past 12 months. | 5.6 (1.8)* | 3.5 (2.1)* |

| 26 | 69 | Implementation of PHC clinical quality improvement initiatives | Percentage of PHC organizations who implemented at least one or more changes in clinical practice as a result of quality improvement initiatives over the past 12 months. | 5.4 (2.7)* | 7.6 (1.3)* |

| 27 | 72 | Professional development for PHC providers and support staff | Percentage of PHC providers and support staff whose PHC organization provided them with support* to participate in continuing professional development within the past 12 months, by type of PHC provider and support staff. | 5.1 (2.7)* | 6.1 (2.8)* |

| 28 | 47 | Counselling on home risk factors for children | Percentage of PHC clients/patients with children under 2 years who were given information on child injury prevention in the home. | 5.1 (3.1)* | 4.8 (3.1)* |

| 29 | 62 | Treatment of acute myocardial infarction (AMI) | Percentage of PHC clients/patients who have had an AMI and are currently prescribed a beta-blocking drug. | 4.5 (2.1)* | 4.1 (2.7)* |

| 30 | 63 | Antidepressant medication monitoring | Percentage of PHC clients/patients with depression who are taking antidepressant drug treatment under the supervision of a PHC provider, and who had follow-up contact by a PHC provider for review within two weeks of initiating antidepressant drug treatment. | 4.5 (2.1)* | 4.0 (2.7)* |

| 31 | 64 | Treatment of depression | Percentage of PHC clients/patients, 18 years and over, with depression who were offered treatment (pharmacological and/or non-pharmacological) or referral to a mental healthcare provider. | 3.9 (3.0)* | 2.6 (1.9)* |

| 32 | 43 | Well baby screening | Percentage of PHC clients/patients who received screenings for congenital hip displacement, eye and hearing problems by 3 years of age. | 3.5 (1.9)* | 6.4 (1.7) |

| 33 | 65 | Treatment of anxiety | Percentage of PHC clients/patients, 18 years and over, with panic disorder or generalized anxiety disorder who are offered treatment (pharmacological and/or non-pharmacological) or referral to a mental healthcare provider. | 3.1 (2.2)* | 2.8 (2.5)* |

| 34 | 66 | Treatment for illicit or prescription drug use problems | Percentage of PHC clients/patients with prescription or illicit drug use problems who were offered, provided or directed to treatment by the PHC provider. | 2.9 (2.0)* | 3.3 (2.2)* |

| 35 | 59 | Asthma control | Percentage of PHC clients/patients, ages 6 to 55 years, with asthma, who were dispensed high amounts (greater than 4 canisters) of short-acting beta2-agonist (SABA) within the past 12 months and who received a prescription for preventer/controller medication (e.g., inhaled corticosteroid – ICS). | 2.7 (1.9)* | 4.6 (2.7)* |

| ¹ Group recommended "visual impairment" be replaced by "diabetic retinopathy." | | | | | |

| * Re-ranked value | | | | | |

About the Authors

Fred Burge, MSc, MD, Professor, Department of Family Medicine, Dalhousie University, Halifax, Nova Scotia

Beverley Lawson, MSc, Research Associate and Lecturer, Department of Family Medicine, Dalhousie University, Halifax, Nova Scotia

Wayne Putnam, MD, Associate Professor, Department of Family Medicine, Dalhousie University, Halifax, Nova Scotia

Correspondence may be addressed to: Dr. Fred Burge, Professor and Research Director, Dalhousie Family Medicine, AJLB 8 QEII HSC, 5909 Veteran's Memorial Lane, Halifax, NS B3H 2E2; tel.: 902-473-4742; fax: 902-473-4760; e-mail: fred.burge@dal.ca.

Acknowledgment

This work was supported by the Canadian Institutes of Health Research (CIHR), grant FRN: MOP-86540. The authors are grateful for the contributions of their research team members and all of the Delphi panelists.

References

Adair, C., E. Simpson and A. Casebeer. 2006. "Performance Measurement in Healthcare: Part II – State of the Science Findings by Stage of the Performance Measurement Process." Healthcare Policy 2(1): 56–78.

Barnsley, J., W. Berta, R. Cockerill, J. Macphail and E. Vayda. 2005. "Identifying Performance Indicators for Family Practice: Assessing Levels of Consensus." Canadian Family Physician 51: 700–8.

British Columbia Ministry of Health. 2006. Full Service Family Practice Incentive Program. Retrieved April 1, 2011. <http://www.primaryhealthcarebc.ca/phc/gpsc_incentive.html>.

Campbell, S.M., J. Braspenning, A. Hutchinson and M. Marshall. 2002. "Research Methods Used in Developing and Applying Quality Indicators in Primary Care." Quality and Safety in Health Care 11: 358–64.

Campbell, S. and J. Hacker. 2002. "Developing the Quality Indicator Set." In M. Marshall, S. Campbell, J. Hacker and M. Roland, eds., Quality Indicators for General Practice: A Practical Guide for Health Professionals and Managers (pp. 7–14). London: The Royal Society of Medicine Press.

Canadian Institute for Health Information (CIHI). 2005. The Health Indicators Project: The Next 5 Years. Ottawa. Retrieved April 28, 2011. <http://secure.cihi.ca/cihiweb/products/consensus_conference_e.pdf>.

Canadian Institute for Health Information (CIHI). 2006a. Report 2: Enhancing the Primary Health Care Data Collection Infrastructure in Canada. Retrieved April 1, 2011. <http://www.cihi.ca/CIHI-ext-portal/internet/EN/TabbedContent/types+of+care/primary+health/cihi006583>.

Canadian Institute for Health Information (CIHI). 2006b. Enhancing the Primary Health Care Data Collection Infrastructure in Canada Report 2: Pan-Canadian Primary Health Care Indicator Development Project. Retrieved April 1, 2011. <http://www.cihi.ca/CIHI-ext-portal/internet/EN/TabbedContent/types+of+care/primary+health/cihi006583>.

Canadian Institute for Health Information (CIHI). 2006c. Pan-Canadian Primary Health Care Indicators Report 1, Volume 1: Pan-Canadian Primary Health Care Indicator Development Project. Retrieved April 1, 2011. <http://www.cihi.ca/CIHI-ext-portal/internet/EN/TabbedContent/types+of+care/primary+health/cihi006583>.

Canadian Primary Care Sentinel Surveillance Network. 2009. Retrieved April 1, 2011. <http://www.cpcssn.ca/cpcssn/home-e.asp>.

Committee on Redesigning Health Insurance Performance Measures, Payment, and Performance Improvement Programs. 2006. Performance Measurement: Accelerating Improvement. Washington, DC: National Academies Press.

Engels, Y., M. Dautzenberg, S. Campbell, B. Broge, N. Boffin, M. Marshall et al. 2005. "Testing a European Set of Indicators for the Evaluation of the Management of Primary Care Practices." Family Practice 22: 215–22.

Freeman, T. 2002. "Using Performance Indicators to Improve Health Care Quality in the Public Sector: A Review of the Literature." Health Services Management Research 15(2): 126–37.

Greene, S.E. and D.B. Nash. 2008. "Pay for Performance: An Overview of the Literature." American Journal of Medical Quality 24: 140–63.

Lester, H., D.J. Sharp, F.D.R. Hobbs and M. Lakhani. 2006. "The Quality and Outcomes Framework of the GMS Contract: A Quiet Evolution for 2006." British Journal of General Practice 56: 244–46.

Lilford, R.J., C.A. Brown and J. Nicholl. 2007. "Use of Process Measures to Monitor the Quality of Clinical Practice." British Medical Journal 335: 648–50.

Marshall, M.N., M.O. Roland, S.M. Campbell, S. Kirk, D. Reeves, R. Brook et al. 2003. Measuring General Practice: A Demonstration Project to Develop and Test a Set of Primary Care Clinical Quality Indicators. London, UK: The Nuffield Trust.

McGlynn, E.A., S.M. Asch, J. Adams, J. Keesey, J. Hicks, A. DeCristofaro et al. 2003. "The Quality of Health Care Delivered to Adults in the United States." New England Journal of Medicine 348: 2635–45.

Murie, J. and G. Douglas-Scott. 2004. "Developing an Evidence Base for Patient and Public Involvement." Clinical Governance: An International Journal 9: 147–54.

Normand, S-T., B.J. McNeil, L.E. Peterson and R.H. Palmer. 1998. "Methodology Matters – VIII: Eliciting Expert Opinion Using the Delphi Technique: Identifying Performance Indicators for Cardiovascular Disease." International Journal for Quality in Health Care 10: 247–60.

Ontario Ministry of Health and Long-Term Care (OMHLTC). 2009 (September). Guide to Physician Compensation. Retrieved April 1, 2011. <http://www.health.gov.on.ca/transformation/fht/guides/fht_compensation.pdf>.

Roland, M. 2004. "Linking Physicians' Pay to the Quality of Care – A Major Experiment in the United Kingdom." New England Journal of Medicine 351: 1448–54.

Roland, M. 2007. "The Quality and Outcomes Framework: Too Early for a Final Verdict." Editorial. British Journal of General Practice 57: 525–27.

Young, G.J., M. Meterko, B. White, B.G. Bokhour, K.M. Sautter, D. Berlowitz et al. 2007. "Physician Attitudes toward Pay-for-Quality Programs." Medical Care Research and Review 64: 331–43.
