Healthcare Quarterly

The Crucial Role of Clinician Engagement in System-Wide Quality Improvement: The Cancer Care Ontario Experience

Abstract

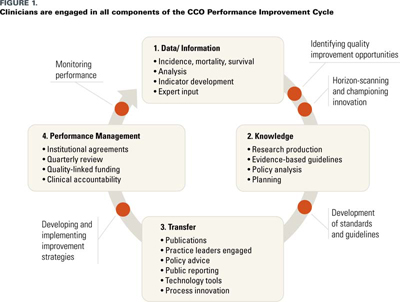

In 2004, Cancer Care Ontario's (CCO) role changed from providing direct cancer service to oversight, with a mission to improve the performance of the cancer system by driving quality, accountability and innovation in all cancer-related services. Since then, CCO has built a model for province-wide quality improvement and oversight – the Performance Improvement Cycle – that exemplifies the key elements of the Excellent Care for All Act, 2010. While ensuring the quality of the cancer system is by necessity a continuous process, the approach taken thus far has achieved measurable results and will continue to form the basis of CCO's future work.

Clinician engagement has been critical to the success of CCO's approach to quality oversight and improvement. CCO uses a variety of formal and informal clinical engagement structures at each step of the Performance Improvement Cycle, and has developed operational processes to support quality improvement, and educational and mentorship programs to build clinician leadership capacity in that area. An example of sustained quality improvement in system performance is illustrated in a case study of the surgical treatment of prostate cancer. The improvement was achieved with strong collaboration across CCO's surgery and pathology clinical programs, with support from informatics staff.

In 2004, Cancer Care Ontario (CCO), the provincial agency responsible for cancer services in Ontario, changed its role from that of direct cancer service provision to one of oversight. Its mission became to improve the performance of the cancer system by driving quality, accountability and innovation in all cancer-related services. The obvious challenge was the method by which this mandate could be accomplished in the absence of direct operational authority. Since then, CCO has built a model for province-wide quality improvement and oversight that exemplifies the key elements of the Excellent Care for All Act, 2010 (ECFA Act) (Duvalko et al. 2009). The CCO Performance Improvement Cycle (Figure 1) is based on routine monitoring and public reporting of performance data, developing and disseminating evidence-based best practice guidance, setting annual quality improvement targets, purchasing cancer services (from hospitals organized into regional cancer programs with dedicated cancer leadership) that enable the achievement of quality as well as volume targets, and making provider teams accountable for achievement of these targets. The annual performance of regional cancer leaders is judged on the degree to which agreed-upon volume and quality targets have been met.

The Performance Improvement Cycle has been successful in addressing some of the pressing issues in cancer quality that existed in 2004. For example, access to radiation treatment and cancer surgery has improved considerably, multidisciplinary case conferences occur regularly, high-complexity cancer services have been consolidated in accordance with evidence-based organizational standards, and patients have the ability to report their symptoms in a standardized manner at each clinical intervention, promoting earlier recognition and intervention (Cancer Quality Council of Ontario [CQCO] 2011). While ensuring the quality of the cancer system is by necessity a continuous process, the approach taken thus far has achieved measurable results and will continue to form the basis of CCO's future work.

Clinician engagement has been critical to the success of CCO's approach to quality oversight and improvement (Dobrow et al. 2008). This paper will describe the deliberate manner in which CCO engages and empowers clinicians in this shared quality improvement agenda, provide a case study of a successful engagement strategy, and provide policy recommendations to bridge the traditional gap between administrative and clinical leadership.

Clinician Engagement throughout the Performance Improvement Cycle

CCO uses a variety of formal and informal clinical engagement structures at each step of its Performance Improvement Cycle. Communities of Practice (CoPs) are informal groups of clinician providers that identify quality gaps and have a common goal of solving quality problems. Expert panels are formed on a time-limited basis to address specific topics (such as development of quality indicators), incorporating best evidence supplemented by consensus. In collaboration with the Program in Evidence-Based Care, multidisciplinary teams develop evidence-based best practice guidance documents, including clinical practice guidelines and organizational standards. Feedback from a larger group of practitioners is incorporated into final documents.

The formal clinical engagement structure is centred around clinical leadership for each of CCO's programmatic areas of focus. Provincial and regional clinical leads form provincial clinical program committees that set the quality agenda. Regional clinical leads are accountable for bringing local perspective to inform the quality agenda and for serving as explicit champions for regional implementation. Regional clinical and administrative leads are jointly accountable, through their regional vice presidents, for regional performance and participate in quarterly performance reviews with CCO. Provincial clinical leads are responsible for provincial program oversight and for knowledge exchange with regional leads to ensure that they are positioned to succeed in their regional commitments.

Operational Processes

CCO has developed a Clinical Accountability Framework that explicitly defines the roles and responsibilities of the provincial and regional clinical leads. It stipulates clear lines of accountability and specifically the relationship between the clinical and administrative leads that generates a model of integrated clinical accountability. The framework has been the foundation for the development of role statements, recruitment processes, annual setting of objectives and performance review. We also developed remuneration guidelines sufficient to free up time from clinical practice. We provide infrastructure support to ensure that clinical leads, a relatively costly resource, are utilized only for appropriate functions. As a direct result of implementing the framework, the quality agenda is formed, executed and evaluated by clinical leaders with clear accountabilities that include formal engagement of a representative group of clinicians from across the province.

Building Leadership Capacity in Quality Improvement

Since clinicians are not routinely trained in quality improvement methodology or in leadership techniques, we recognized our responsibility to build leadership capacity in quality improvement. We hold an annual educational event that has used a graduated curriculum to build leadership skills and incorporate regional successes as an explicit way to exchange best practice in implementation. For instance, one such event introduced the Institute for Healthcare Improvement's clinician engagement framework, provided a workshop format to allow application of the framework to actual local examples and included presentation of "what worked, what did not" from some of the more experienced clinical leaders. Provincial leads incorporate practical advice designed to enhance leadership capacity of regional leads in their regular program meetings. In addition, provincial leads conduct regular site visits and coach and mentor regional leads. Importantly, leadership development is aligned with regional and provincial improvement goals.

Engaging Front-Line Providers in a Quality Improvement Agenda

The success of any quality improvement initiative requires not only front-line clinicians' acknowledgement of a quality gap but also their involvement in specific quality improvement efforts. We have relied heavily on regular provision of performance data both internally and publicly to drive quality improvement. We contrast current performance with evidence-based best practice and where possible give data on top performance within Ontario and beyond to illustrate the improvement potential.

While these data are most commonly provided in aggregate at the hospital or regional level, we are increasingly providing clinicians with their individual performance data. Clinicians are strongly motivated by a desire to provide best care, and they respond well to this technique. On occasion we have used academic detailing. We also host educational events to highlight best practice and, in a limited way to date, have made individual practice audits a prerequisite for registration in these events.

For complex quality gaps that involve clinicians, hospital operations and information technology solutions, provision of current performance data and best practice guidance, while necessary, is insufficient for change. We have therefore developed capacity to help regions implement best practice using a variety of techniques ranging from coaching to active implementation teams. Synoptic pathology reporting is one example. Pathologists identified that standardized reporting checklists would ensure that pathology reports included all important information, and that this would improve the efficiency of clinicians in their assignment of prognosis and treatment decisions. They further identified that an electronic tool would facilitate uptake. The province-wide implementation of electronic pathology reports in a standardized format (synoptic reports with evidence-based content and data standards) required a complex partnership of clinicians, information technology and administrative professionals.

We also link best practice advice to funding recommendations and delivery models where appropriate. These recommendations are made by clinician experts, based on best evidence. For example, cancer drugs, PET/CT scans, and thoracic and hepatobiliary surgery are all reimbursed only when done according to eligibility criteria or in accordance with organizational standards.

Case Study: Quality of Prostatectomy Surgery

A tangible example of sustained quality improvement in system performance has been realized in the surgical treatment of prostate cancer. This required a strong collaboration across CCO's surgery and pathology clinical programs, with support from informatics staff.

First, we formed a multidisciplinary Urology Community of Practice. While many issues regarding multidisciplinary care were raised at the initial meeting, one area of concern was the high rate of positive margins after prostatectomy surgery. During such surgery, the surgeon's goal is to remove all of the cancer, along with a rim of normal tissue around it (the "surgical margin"). The pathologist examines the removed tissue and analyzes the surgical margin to be sure it is clear of any cancer cells. Positive surgical margins are associated with higher rates of cancer recurrence and with an increased need for other treatments (e.g., radiation therapy), which results in increased side effects for the patient and increased resource utilization for the cancer system. A manual audit of radical prostatectomy pathology reports from 2005/06 confirmed positive margin rates of 31% and 61% for pathological stage T2 (pT2) and T3 prostate cancers, respectively. The rates seemed inordinately high, especially the pT2 rates, and there was significant inter-hospital variability. The CoP identified several potential contributing factors: (1) variable patient selection for radical prostatectomy; (2) pathologists' variable interpretation of a "positive margin"; and (3) variability among surgeons with respect to specific technical aspects of the surgery.

The CoP believed that optimization of pathology and surgical techniques could improve the positive margin rate. The critical success factors in the improvement strategy were as follows: (1) The CoP, since it possessed the clinical expertise, developed the engagement strategy; CCO's role was to provide support. (2) An evidence-based clinical practice guideline, Guideline for Optimization of Surgical and Pathological Quality Performance in Radical Prostatectomy in Prostate Cancer Management (available at https://www.cancercare.on.ca), was developed. (3) The CoP recommended a best practice target of <25% margin positivity for pT2 prostate cancer. The CoP agreed that achieving a 0% positive margin rate was neither attainable nor, in fact, desirable, because quality of life (through impotence or incontinence) could be unnecessarily sacrificed in the name of optimizing margin performance, potentially with no change in survival outcomes. (4) Performance data were shared anonymously in a non-punitive environment with the philosophy of performance improvement and were included at an aggregate level in the publicly available quality report for cancer, the Cancer System Quality Index. (5) Prostate cancer "champions" consisting of local and regional pathology, surgery and radiation oncology leaders became the disciples for practice change locally.
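As a rough illustration of how margin positivity might be tallied against the CoP's <25% pT2 target, the following sketch computes a positive margin rate per hospital from a list of audit records. The record fields (`hospital`, `stage`, `margin_positive`), the function names and the sample records are all hypothetical and are not drawn from CCO's data or systems; only the 25% target comes from the text above.

```python
from collections import defaultdict

# Best practice target named in the text: <25% margin positivity for pT2.
PT2_TARGET = 0.25

# Hypothetical, made-up audit records for illustration only.
SAMPLE_REPORTS = [
    {"hospital": "A", "stage": "pT2", "margin_positive": True},
    {"hospital": "A", "stage": "pT2", "margin_positive": False},
    {"hospital": "A", "stage": "pT2", "margin_positive": False},
    {"hospital": "A", "stage": "pT2", "margin_positive": False},
    {"hospital": "A", "stage": "pT3", "margin_positive": True},  # other stage, ignored
    {"hospital": "B", "stage": "pT2", "margin_positive": True},
    {"hospital": "B", "stage": "pT2", "margin_positive": False},
    {"hospital": "B", "stage": "pT2", "margin_positive": False},
    {"hospital": "B", "stage": "pT2", "margin_positive": False},
    {"hospital": "B", "stage": "pT2", "margin_positive": False},
]


def margin_positivity_by_hospital(reports, stage="pT2"):
    """Return {hospital: (positive_count, total_count, rate)} for one stage."""
    counts = defaultdict(lambda: [0, 0])  # hospital -> [positive, total]
    for report in reports:
        if report["stage"] != stage:
            continue
        counts[report["hospital"]][1] += 1
        if report["margin_positive"]:
            counts[report["hospital"]][0] += 1
    return {h: (pos, total, pos / total) for h, (pos, total) in counts.items()}


def meets_target(rate, target=PT2_TARGET):
    """The target is a strict upper bound: the rate must be below it."""
    return rate < target


rates = margin_positivity_by_hospital(SAMPLE_REPORTS)
for hospital, (pos, total, rate) in sorted(rates.items()):
    status = "meets target" if meets_target(rate) else "above target"
    print(f"Hospital {hospital}: {pos}/{total} = {rate:.0%} ({status})")
```

In practice, small per-hospital case counts make such rates unstable, so a comparison of this kind would likely need confidence intervals or pooling over a longer period before being fed back to providers.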

Local events aimed at quality improvement were able to provide effective knowledge transfer. These events were facilitated by low-cost support from CCO, supported by provincial clinical leads (surgery and pathology) and led locally by regional heads of cancer surgery and pathology with local prostate cancer champions. Recognized provincial leaders shared best practices on pathology specimen handling and interpretation and on surgical technique, with the philosophy that "quality improvement occurs locally." This approach has resulted in a measurable drop in the provincial pT2 margin positivity rate to 21%, with some regions and individual hospitals showing rates of less than 20%. There is still, however, some significant variation. Further performance improvement will be based on ongoing non-punitive sharing of performance data at the provider level to leverage clinicians' desire to deliver high-quality care and their anticipated efforts to improve performance where they are below the performance of their peers. In addition to individual accountability for quality improvement, regional clinical leads continue to be accountable for regional performance and report on progress in quarterly reviews with their administrative leaders and CCO leadership.

This general approach is used to drive quality improvements in all the quality indicators described in the Cancer System Quality Index. Each indicator has a "business owner," usually a provincial clinical program, charged with working with clinicians and relevant stakeholders to identify the source of the quality gap, then develop and implement a program of work with progressive improvement targets attached. Expectations are embedded in annual contracts with hospitals and regions, and progress is tracked in quarterly reviews.

Policy Recommendations

Successful ECFA Act implementation will require significant clinician engagement. Our policy recommendations are based on CCO's experience to date and our desired directions to deepen clinician engagement.

- Clinicians should be provided with their own performance data for quality improvement.

- Formal networks of clinicians with defined roles and responsibilities will facilitate greater accountability for performance improvement and quality.

- Clinician remuneration should be linked to quality expectations in a transparent system developed jointly by clinicians and payers.

- Clinicians should be formally affiliated with care systems (hospitals, community care, etc.) to facilitate integrated accountability and to foster the development of novel accountability structures where all parties bear risk and share rewards.

About the Author(s)

Carol Sawka, MD, FRCPC, is vice president of clinical programs and quality initiatives, Cancer Care Ontario; co-chair of the CCO Clinical Council; and professor of medicine, health policy, management and evaluation and public health sciences, University of Toronto. She is a medical oncologist who practised in the area of breast cancer for many years at Sunnybrook's Odette Cancer Centre.

John Srigley, MD, FRCPC, is Cancer Care Ontario's provincial clinical lead for the Pathology and Laboratory Medicine Program; and professor, Department of Pathology and Molecular Medicine, McMaster University. He is a practising pathologist at The Credit Valley Hospital and Trillium Health Centre, with special interests in oncological pathology and quality assurance.

Jillian Ross, MBA, RN, is director, Clinical Programs, Strategy and Integration and directs the Clinical Council Secretariat and Clinician Engagement Centre of Practice at Cancer Care Ontario.

Jonathan Irish, MD, FRCSC, is Cancer Care Ontario's provincial clinical lead for the Surgical Oncology Program; and Professor, Department of Surgery, University of Toronto. He is an otolaryngologist at University Health Network.

References

Cancer Quality Council of Ontario. 2011. "Annual Cancer System Quality Index (CSQI) 2011." Retrieved Oct. 1, 2012. <www.csqi.on.ca>.

Dobrow, M.J., T. Sullivan and C. Sawka. 2008. "Shifting Clinical Accountability and the Pursuit of Quality: Aligning Clinical and Administrative Approaches." Healthcare Management Forum 21(3): 6–19.

Duvalko, K.M., M. Sherar and C. Sawka. 2009. "Creating a System for Performance Improvement in Cancer Care: Cancer Care Ontario's Clinical Governance Framework." Cancer Control Journal 16(4): 293–302.
