Nursing Leadership

Abstract

In June 2002, the Canadian Nurses Association (CNA) released a revised study projecting a shortage of 78,000 nurses in Canada by 2011 (Ryten 2002). This projected nursing shortage, along with the increasing average age of nurses, led The Ottawa Hospital to actively plan and strategize the sourcing of Registered Nurse (RN) positions. Annual workforce planning is followed by the development of RN sourcing strategies in collaboration with human resources and nursing. Our challenge was to provide an evidence-based evaluation of our annual sourcing strategy.

Evaluation Model Selection

The changing culture of public administration invites accountability for results and outcomes (Mayne 2001). The intention of evaluation is to help decision-makers make wise decisions (Weiss 1988). The purpose of evaluation is to provide evidence of successes and shortcomings, measuring our progress towards the sought-after results and our flexibility to adjust operations (Weiss 1988). Program evaluation is an essential tool for the management of any type of program, linking daily activities to specific goals and objectives. Internal evaluation has grown in popularity in recent years due to disenchantment with external evaluators, funding cuts and concern with the poor utilization of evaluations (Love 1991). Internal evaluation is a form of action research that supports organizational development and planned change (Love 1991). The internal evaluator is frequently responsible not only for analyzing the problems and making recommendations, but also for correcting difficulties and implementing solutions (Sonnichsen 1988). An evaluation plan serves as a guiding document. It outlines how the evaluation will be carried out, who the stakeholders are, what will be measured and how, and what the timelines and specific long- and short-term objectives are. Participatory evaluation is one branch of the evaluation literature; it implies collaborative work with the individuals, groups or communities that have a decided stake in the program development (Cousins and Whitmore 1998). This form of evaluation matched our vision.

A review of the program evaluation literature led us to consider using a program logic model (a participatory model) to guide the evaluation and planning process for our Annual RN Sourcing Strategy. Since the late 1980s, program logic models have been important tools for program planning, management and evaluation, describing the sequence of events for bringing about change and relating activities to outcomes (Bickman 1987). The process of developing a logic model is often as valuable to program teams as the program logic model itself, and the approach can be used in any type and size of program. It seemed the best fit for what we were trying to accomplish.

The Program Logic Model

Program theory guides evaluations by identifying key program elements and articulating how they are expected to relate to each other (Cooksy et al. 2004). Theory-driven evaluations are more likely to discover the effects of the program because they identify and examine a larger set of potential program outcomes (Chen and Rossi 1980). Weiss (1972) recommended the use of path diagrams to model the sequence of events between a program's intervention and the desired outcomes. A program logic model is a systematic, visual way to present a planned program with its underlying assumptions and theoretical framework (W.K. Kellogg Foundation 1998, 2001). Program logic models may be generally defined as flow charts that display a sequence of logical steps in program implementation and achievement of desired outcomes, and that offer a unique venue to communicate the relations of program resources to the outcomes in a simple picture (Cooksy et al. 2004). A program logic model provides a clear description of the program (a blueprint); ties the program purpose to decision-making; promotes the systematic gathering, analysis and reporting of both quantitative and qualitative data; and promotes stakeholder involvement and the use of evaluation findings (Porteous et al. 1999, 2002). Within the model, a program is broadly defined as a set of activities, supported by a group of resources, designed for particular groups and aimed at achieving specific outcomes (Porteous et al. 1999).

The benefits of developing and using a program logic model can be seen during the different phases of the program: the design and planning/development phase, the program implementation phase and the program evaluation and strategic reporting phase. The benefits include a strengthened case for program investment, the development of a simple image of how and why the program works, and an opportunity to reflect on the group process and to see the change over time (Porteous et al. 1999, 2002; W.K. Kellogg Foundation 1998, 2001).

The strategy is clarified during the design and planning/development phase, bridging the gap between strategic and operational planning. Gaps are identified in the theory or logic of the program, while stakeholders build a shared understanding of the program and of how its components will work together. This phase may lead to consideration of different or innovative ways to develop the program. Outcomes are identified and timelines established. The difference between the activities and the intended outcomes of the program will become clearer, and critical questions for evaluation will be identified (Porteous et al. 1999; W.K. Kellogg Foundation 1998, 2001).

In the implementation phase, the logic model supports the development of the program management plan. The logic model clearly demonstrates the cause-and-effect relationship between the activities and the results, assigns accountability, and supports the development of program performance measures for the ongoing monitoring of the program. It allows for program adjustments and provides a venue for taking an inventory of assets and resources needed for the program. The completed logic model demonstrates the theory behind the activities, providing a one-page summary of key components that is easy to share with others (Porteous et al. 1999, 2002; W.K. Kellogg Foundation 1998, 2001).

Program logic models are very useful during the program evaluation and strategic reporting phase, providing documentation of accomplishments and a method for organizing the data, preparing reports and defining the variance between the planned and actual outcomes (W.K. Kellogg Foundation 1998, 2001).

Limitations of program logic models include the lack of accountability for unintended consequences, and the focus on a single use for the program. The model does not account for the effects of feedback or conflict, or the issues of control and participation within the program. The assumptions are omnipotent: because we have a plan, we will succeed in implementing it (Cooksy et al. 2004).

Program logic models can be used for planning programs and communicating them to stakeholders and within the organization. Logic models can be used for orientation and training of new staff. The models are also excellent tools for ongoing program monitoring and evaluation and can be used for grant applications. Key audiences include program managers, staff, partners, stakeholders, senior management, policy-makers, other organizations and funders, to name a few. Other benefits of program logic models include a strengthened case for program investment; they provide a simple image of how and why a program works. Logic models reflect the group process that led to the development of the model and provide a tool for monitoring change over time (Porteous et al. 1999, 2002; W.K. Kellogg Foundation 1998, 2001).

Porteous et al. (1999) have developed tools to aid in the development of a program logic model. They include CAT and SOLO worksheets. The authors suggest starting with whichever worksheet is easiest. For example, if the program is new, it may be best to start with the outcomes (the SOLO worksheet), as they are more easily defined.

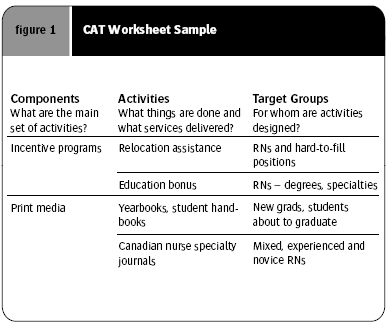

The CAT worksheet includes the Components, Activities and the Target groups. The components are the groups of closely related activities. The activities are the things the program does to work toward desired outcomes. Figure 1 is an example of a CAT worksheet for our RN Sourcing Strategy.

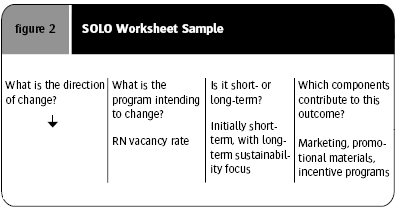

The SOLO worksheet concerns the short- and long-term outcomes. The short-term outcomes are the direct result of the program, demonstrating why the program activities will lead to long-term outcomes. The program will be held accountable for the short-term outcomes. The long-term outcomes are the ultimate goals of the program and are expressed as a change in practice, behaviour or condition. Long-term outcomes are more difficult and costly to evaluate but are assumed likely to be achieved if short-term outcomes are achieved (Porteous et al. 1999). Figure 2 is an example of a SOLO worksheet for our RN Sourcing Strategy.

Program logic models help create the framework for the evaluation by identifying the questions for each of the components. The model is useful because it focuses on the questions that produce answers of real value to those involved in the program and the stakeholder group (W.K. Kellogg Foundation 1998, 2001). The evaluation questions can be divided into two domains: formative and summative. Formative questions are designed to improve the program, focusing on the program activities and short-term outcomes for the purpose of monitoring the progress of the program and making mid-course corrections as needed. Summative questions are used to generate information to demonstrate the results and are focused on the short-term outcomes and their impact. The purpose of the data collection is to determine the value based on results (W.K. Kellogg Foundation 1998, 2001).
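For teams that keep their worksheets electronically, the CAT and SOLO structure described above can be sketched as a small data structure. The following Python example is an illustrative sketch only: the class names, the `gaps` check and the sample entries are assumptions made for this example and are not taken from Porteous et al. (1999) or from the worksheets shown in Figures 1 and 2.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Component:
    """One row of a CAT worksheet: a group of closely related activities."""
    name: str
    activities: List[str] = field(default_factory=list)
    target_groups: List[str] = field(default_factory=list)


@dataclass
class LogicModel:
    """A program logic model combining CAT rows with SOLO outcomes."""
    program: str
    components: List[Component] = field(default_factory=list)
    short_term_outcomes: List[str] = field(default_factory=list)
    long_term_outcomes: List[str] = field(default_factory=list)

    def gaps(self) -> List[str]:
        """List obvious holes in the model, such as a component with no activities."""
        problems = []
        for c in self.components:
            if not c.activities:
                problems.append(f"component '{c.name}' has no activities")
            if not c.target_groups:
                problems.append(f"component '{c.name}' has no target groups")
        if not self.short_term_outcomes:
            problems.append("no short-term outcomes defined")
        if not self.long_term_outcomes:
            problems.append("no long-term outcomes defined")
        return problems


# Hypothetical entries for illustration only; not the content of Figures 1 and 2.
model = LogicModel(
    program="RN Sourcing Strategy",
    components=[
        Component(
            name="Advertising",
            activities=["Place advertisement in a nursing journal"],
            target_groups=["External RNs"],
        ),
        Component(name="Job fairs"),
    ],
    short_term_outcomes=["Resumés received from advertisements"],
)

for problem in model.gaps():
    print(problem)
```

Running the sketch lists the unfinished parts of the draft model, which mirrors the way a logic model exposes gaps in the theory of a program during the design phase.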

Our Experiences

The Ottawa Hospital wanted to provide an evidence-based evaluation of our annual nursing recruitment strategy to provide valuable data and information to our stakeholders and help us make decisions for the next year's strategy development. After reviewing the program evaluation literature, we chose to use a program logic model and the evaluation process developed by Porteous et al. (1999). We examined the purpose of our evaluation and determined that it was to provide us with information on the successes and weaknesses in our strategy, including both qualitative and quantitative data.

In developing the program logic model there are several questions to consider (W.K. Kellogg Foundation 1998, 2001): What issues does the program address? What needs led to addressing the issues? What are the desired results? What influential factors could influence the change? Why do you believe the program will work? Why will your program's approach be effective?

In answering these initial questions to define our RN Sourcing Strategy, we were forced to examine the components of the program, the activities we were using to work toward our desired outcomes and the resources we would need, giving us new insight into what we were looking to accomplish. It gave us a clearer picture of what data, both qualitative and quantitative, we should be capturing and how we could use it in our annual evaluation. We had always consulted with our stakeholder groups, but by using the program logic model, the stakeholders had more input and ownership of the strategy. We initially held brainstorming sessions with a small group of stakeholders. The initial draft logic model that was developed provided us with a clearer picture of what the program would be, what evaluation questions we could ask and what data we would collect.

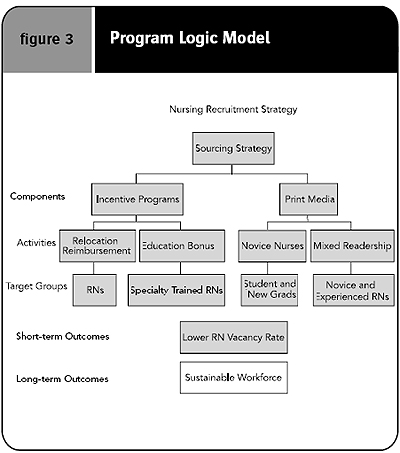

Our next step was to develop a draft of our RN Sourcing Strategy to present to a larger stakeholder group for feedback. After the feedback was obtained, the logic model was updated and became the working document to guide the annual program. Figure 3 is part of the program logic model we developed.

Program logic models are used as evaluation plans. It is important to ask both formative and summative evaluation questions. Formative questions provide information to improve the program activities and short-term objectives. For our purposes, an example is how many resumés were generated from an advertisement in a journal. Summative questions demonstrate the impact of the program based on the results obtained and include, for example, the number of external hires and the vacancy rate, to name two. The evaluation should be considered from several vantage points: the context (how the program functions), the implementation (the extent to which the activities were executed as planned) and the outcomes (the extent to which the program generates intended results).
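The distinction between formative and summative questions can be illustrated with a short Python sketch. It is a sketch under stated assumptions: the `EvaluationQuestion` class, the definition of the vacancy rate as vacant positions divided by budgeted positions, and all of the numbers are hypothetical and are not drawn from The Ottawa Hospital's data.

```python
from dataclasses import dataclass


def vacancy_rate(vacant_positions: int, budgeted_positions: int) -> float:
    """Vacancy rate as a percentage of budgeted positions (assumed definition)."""
    if budgeted_positions <= 0:
        raise ValueError("budgeted_positions must be positive")
    return 100.0 * vacant_positions / budgeted_positions


@dataclass
class EvaluationQuestion:
    text: str
    kind: str       # "formative" or "summative"
    indicator: str  # the measure collected to answer the question


# Example questions based on those named in the text; indicator labels are illustrative.
questions = [
    EvaluationQuestion(
        "How many resumés did the journal advertisement generate?",
        "formative",
        "resumés per advertisement",
    ),
    EvaluationQuestion("How many external RNs were hired?", "summative", "external hires"),
    EvaluationQuestion("What is the RN vacancy rate?", "summative", "vacancy rate (%)"),
]

# Collect the indicators that answer summative questions.
summative = [q.indicator for q in questions if q.kind == "summative"]
print(summative)

# Hypothetical figures: 50 vacant positions out of 1,000 budgeted positions.
print(vacancy_rate(vacant_positions=50, budgeted_positions=1000))
```

Tagging each question with its kind and indicator makes it easier to see which data serve mid-course corrections and which belong in the annual report.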

When the annual evaluation of our RN sourcing strategy is complete, an evaluation report is written and presented to the various stakeholder groups at open forums. Subsequent hard copies are distributed. The cycle then resumes with the development of next year's RN sourcing strategy, based on the evidence from the previous evaluations, our projected growth and retirements, research and environmental scan and input from our different stakeholder groups.

Conclusion

The use of an evidence-based evaluation model to guide both the strategy development and the evaluation process has strengthened our RN sourcing strategies. We have lowered our RN vacancy rate from 13.2% in 1999 to 1.9% in July 2003. We are currently running an RN vacancy rate under 5%, with the majority of the current vacancies resulting from the recent expansion of our critical care and surgical beds. We have obtained valuable data on where our successes have been and where we have had to make changes as we implement the program.

We will continue to use the program logic model and the worksheets developed by Porteous et al. to support our annual workforce planning initiatives, evaluate their success and report to our stakeholder groups.

About the Author(s)

Cheryl Anne Smith, RN, MScN, CNeph (C)

Corporate Coordinator Nursing Recruitment, Retention & Recognition

The Ottawa Hospital

For more information contact: Cheryl Anne Smith, The Ottawa Hospital, 1053 Carling Ave, Ottawa, K1Y 4E9, phone: 613-761-4466, fax: 613-761-4728, e-mail: casmith@ottawahospital.on.ca

Acknowledgment

Originally published in the Longwoods Case Study Library, www.longwoods.com/product.php?productid=17730&page=1

References

Bickman, L., ed. 1987. Using Program Theory in Evaluation, New Directions for Program Evaluation Theory (no.33). San Francisco, CA: Jossey-Bass.

Chen, H.T. and P.H. Rossi. 1980. "The Multi-Goal Theory-Driven Approach to Evaluation: A Model Linking Basic and Applied Social Science." Social Forces 59: 106-22.

Cooksy, L.J., P. Gill and P.A. Kelly. 2004. "The Program Logic Model as an Integrative Framework for a Multimethod Evaluation." Florida State University. Retrieved March 20, 2005. < http://www.hsrd.houston.med.va.gov/AdamKelly/Logic.html >.

Cousins, J.B. and E. Whitmore. 1998. "Framing Participatory Evaluation." New Directions in Education 89: 8-23.

Love, A.J. 1991. Internal Evaluation. Newbury Park, CA: Sage Publications Inc.

Mayne, J. 2001. "Addressing Attribution Through Contribution Analysis: Using Performance Measures Sensibly." The Canadian Journal of Program Evaluation 16(1): 1-24.

Porteous, N.L., B.J. Sheldrick and P.J. Stewart. 1999. The Program Evaluation Tool Kit: A Blueprint for Public Health Management. Retrieved March 20, 2005. < http://ottawa.ca/city_services/grants/toolkit/diagram_en.pdf >.

Porteous, N.L., B.J. Sheldrick and P.J. Stewart. 2002. "Introducing Program Teams to Logic Models: Facilitating the Learning Process." The Canadian Journal of Program Evaluation 17(3): 113-41.

Ryten, E. 2002. Planning for the Future: Nursing Human Resources Projection. Ottawa, ON: CNA.

Sonnichsen, R.C. 1988. "Advocacy Evaluation: A Model for Internal Evaluation Offices." Evaluation and Program Planning 11(2): 141-48.

Weiss, C.H. 1972. Evaluation Research: Methods of Assessing Program Effectiveness. Englewood Cliffs, NJ: Prentice-Hall.

Weiss, C.H. 1988. "If Program Decisions Hinged Only on Information: A Response to Patton." Evaluation Practice 9(3): 15-28.

W.K. Kellogg Foundation. 1998. Evaluation Handbook. Battle Creek, MI: W.K. Kellogg Foundation. Retrieved March 20, 2005. < http://www.wkkf.org >.

W.K. Kellogg Foundation. 2001. Using Logic Models to Bring Together Planning, Evaluation & Action. Logic Model Development Guide. Battle Creek, MI: W.K. Kellogg Foundation. Retrieved March 20, 2005. < http://www.wkkf.org >.
